I’ve been dealing with a rather persistent comment spam issue on my blog. Akismet seems to ignore this particular type of trackback spam and has let hundreds slip through over the last few months. Since my blog really isn’t that busy, the spam is very easy to identify. I don’t want to turn off trackbacks, because I have received legitimate ones from people who referenced my site while showing how they solved a problem.

A couple of weeks ago I installed Simple Trackback Validation with Topsy Blocker, which has done a good job of catching the ones that Akismet seems to have problems detecting. As I run a rather complex setup for testing my plugins, I submitted a bug fix, which the author promptly incorporated. I don’t know why Akismet doesn’t detect keyword keyword… as potential spam, but almost every trackback Akismet has missed follows that pattern, and so far the other plugin has caught every single one.

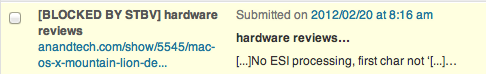

This morning I noticed two comments had been posted in the last seven hours and, when I looked, I saw:

While my site isn’t that busy, it ranks fairly well for some search phrases and in some social circles. The most popular post on my blog was written over two years ago and was for Mac OS X Snow Leopard. Other posts have been more popular over a thirty-day period, but that post has stood the test of time even though it is now two generations of operating systems behind.

But I do have sites that send trackback spam to that particular page quite frequently, and Akismet misses them about 85% of the time. You wouldn’t know it from the Akismet graphs, which claim a much higher success rate, almost the inverse of that failure rate.

When I get links from Anandtech.com, I often check them to make sure they really are linking to me. In a few spot checks in the past they were, but those links appear to have since been cleaned from their forums and from the two posts where I remember them being listed. Today, however, the trackback was on a very new article that had no relation to the page it linked to and, obviously, no link from their site pointed to my site.

I looked back through the approved comments and found 71 other trackbacks. I investigated a number of the pages and, as you might suspect, my link wasn’t present on any of them.
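The manual check I did on those pages can be roughly automated. What follows is only a minimal sketch, assuming the HTML of the page that sent the trackback has already been fetched. The names `LinkCollector` and `page_links_to` are made up for illustration, and this is not necessarily how Simple Trackback Validation does its check.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag found in a page (hypothetical helper)."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names for us.
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def page_links_to(html, my_host):
    """Return True if any anchor in `html` points at `my_host` or a subdomain."""
    parser = LinkCollector()
    parser.feed(html)
    my_host = my_host.lower()
    for href in parser.hrefs:
        try:
            host = (urlparse(href).hostname or "").lower()
        except ValueError:
            # Malformed URL: treat it as not linking to us.
            continue
        if host == my_host or host.endswith("." + my_host):
            return True
    return False
```

A trackback whose source page returns `False` here is a good candidate for the spam queue. The sketch only looks at real anchor tags, so a page that merely mentions the domain in plain text does not count as a link. Fetching the page (for example with `urllib.request.urlopen`) is left out on purpose.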

This is where the analysis turns a bit sinister. Why did they pick a page that detailed an issue with Varnish and gzip-compressed pages to link to an article Anandtech wrote yesterday? Age of the post? The original post is from December 2009, though it is the outlier in the stats.

When I looked at thirty of the trackbacks, a curious pattern emerged. Since adding the social media buttons for Google+, Twitter and Facebook, I’ve had a quick metric to gauge post popularity and, lo and behold, Anandtech is targeting posts that have high tweet counts, with the exception of the original outlier.

The original post is linked to a post that deals with social game design, which is also a popular post. There may have been a trackback on that page which I deleted ages ago, and their trackback bot just spidered it.

Or the bot could be completely dumb and have just taken the URLs from topsy.com, if they looked far enough back through my history, except for the original outlier.

But Anandtech, welcome to the Comment Blacklist.